With its latest feature, Instagram says it’s aiming to give users more control over what it considers “sensitive content.”

While Instagram has always had rules about what kind of content can be highlighted, or even exist, on the platform, including its Community Guidelines and Recommendation Guidelines, the new feature homes in on “sensitive content.”

By Instagram’s definition, “sensitive content” can include posts that “don’t necessarily” break site rules but could still “potentially be upsetting” to some people. Examples given in a press release this week include posts that could be considered sexually suggestive or violent.

“We believe people should be able to shape Instagram into the experience that they want,” a rep for the Facebook-owned social media service said Tuesday. “We’ve started to move in this direction with tools like the ability to turn off comments, or restrict someone from interacting with you on Instagram. Today we’re taking another step and launching what we call ‘Sensitive Content Control,’ which allows you to decide how much sensitive content shows up in Explore.”

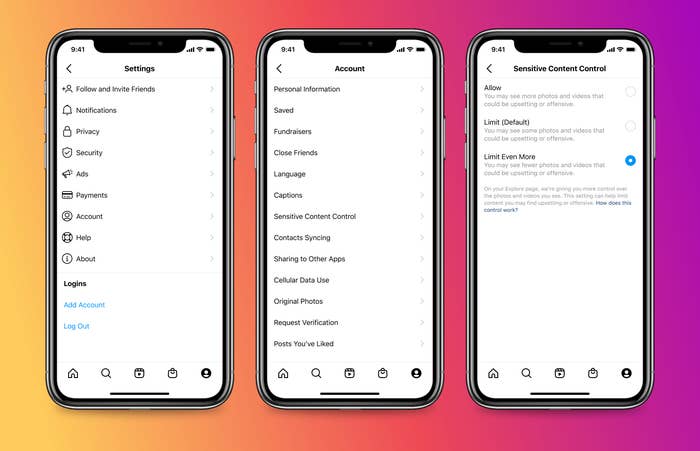

Users will be able to leave things as they are or adjust their Sensitive Content Control settings to see more or less “sensitive content” on their Explore page. Accessing the Sensitive Content Control feature is as simple as tapping the Settings menu in the upper right corner, tapping Account, and then tapping Sensitive Content Control.

From there, you can leave the feature at its default setting, which limits some content. You can also select the Limit Even More option, which further reduces how much content deemed “sensitive” a user sees, or the Allow option. The Allow option, however, will only be selectable for Instagram users who are 18 years of age or older.

The Sensitive Content Control rollout comes roughly a week after the announcement of Instagram’s Security Checkup, a new feature that aims to increase security for account holders.